Prompt Engineering Is Software Engineering: Building merchi.ai, Chapter 4

Moving Beyond the “Chatbot” Fallacy

If you browse LinkedIn or Twitter in 2026, you will find endless “cheat sheets” for better prompting. Most of this content treats prompt engineering as a form of creative writing, a way to “sweet-talk” an LLM into giving a better answer. At merchi.ai, we view this as the “Chatbot Fallacy.” When you are building an enterprise-grade merchandising engine that processes millions of products daily, prompts cannot be static strings or clever “hacks” hidden in a codebase. They must be treated as first-class software assets.

In the high-stakes world of automated merchandising, the output of a prompt defines the digital storefront of a multi-billion-pound brand. If the prompt fails to respect a luxury brand’s tone of voice, or if it hallucinates technical attributes for a piece of industrial machinery, the business impact is immediate and measurable. We realised very early in our journey as a small team that the only way to manage this complexity was to bring the discipline of software engineering to our prompt management. This meant version control, automated testing, per-tenant configuration, and a robust templating system.

The transition from “writing prompts” to “architecting prompt systems” is what allows merchi.ai to serve a luxury fashion house and a hardware wholesaler using the exact same infrastructure. We don’t write different code for different customers; we assemble different prompts at runtime. This chapter is a deep dive into the merchi.ai prompt management system: an architecture designed to handle the non-deterministic nature of AI with the deterministic rigour of a production PIM.

The Case for Database-Driven Prompt Management

The first decision we made was to move prompts out of the codebase and into a dedicated prompt table within our Supabase database. Hardcoding prompts is a recipe for disaster. If you want to tweak the way merchi.ai handles SEO metadata for Italian translations, you shouldn’t have to wait for a full Vercel deployment. By storing prompts in the database, we can update the “intelligence” of our platform in milliseconds without touching a single line of application code.

Each prompt record is more than a simple text column. It includes a version history, a unique identifier for the specific merchandising task (e.g., extract_attributes, generate_copy, alt_text), and an optional tenant-specific override. This allows us to provide a “Global Default” prompt for all users while giving our enterprise clients the ability to customise every single instruction to fit their specific internal workflows. We manage this through a custom-built Admin UI at /admin/prompt-manager.
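Here is a minimal sketch of a prompt record and its lookup. It uses an in-memory array as a stand-in for the Supabase table. The field names (`task`, `tenantId`, `version`, `template`) and the `resolvePrompt` helper are hypothetical. The resolution rule is one plausible reading of the override behaviour described above: the tenant's latest version wins, otherwise the latest Global Default is used.

```typescript
// Hypothetical shape of a row in the prompt table. A null tenantId marks
// the "Global Default" that applies to every tenant without an override.
interface PromptRecord {
  task: string; // e.g. "extract_attributes", "generate_copy", "alt_text"
  tenantId: string | null;
  version: number; // increases with each edit of a (task, tenantId) pair
  template: string;
}

// Return the highest-version record in a list, or undefined if it is empty.
function latest(records: PromptRecord[]): PromptRecord | undefined {
  return records.reduce<PromptRecord | undefined>(
    (best, r) => (best === undefined || r.version > best.version ? r : best),
    undefined,
  );
}

// Resolve the prompt for a task: prefer the tenant's own override and
// fall back to the Global Default when no override exists.
function resolvePrompt(
  rows: PromptRecord[],
  task: string,
  tenantId: string,
): PromptRecord | undefined {
  const forTask = rows.filter((r) => r.task === task);
  const override = latest(forTask.filter((r) => r.tenantId === tenantId));
  return override ?? latest(forTask.filter((r) => r.tenantId === null));
}
```

With this rule, a new tenant gets sensible behaviour from day one. Only tenants who have explicitly customised a task see their own instructions.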

In this admin interface, we can view a “diff” between versions, much like a GitHub pull request. This is essential for debugging. If a customer reports that their product descriptions have suddenly become too verbose, we can trace back the exact version of the prompt that was used for those generations and roll it back to a previous state. This provides a level of accountability and traceability that is missing from almost every “AI-wrapper” on the market today.
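A rollback can be modelled as a new version rather than a destructive edit, much like `git revert`. That way the history that makes tracing possible is never lost. The sketch below assumes each generation is logged with the prompt version that produced it. The `Generation` type and the `traceVersion` and `rollbackTo` helpers are hypothetical.

```typescript
interface PromptVersion {
  version: number;
  template: string;
  createdAt: Date;
}

// Hypothetical log entry written alongside every generation.
interface Generation {
  productId: string;
  promptVersion: number;
}

// Find the exact prompt version behind a given product's generation.
function traceVersion(
  history: PromptVersion[],
  gen: Generation,
): PromptVersion | undefined {
  return history.find((v) => v.version === gen.promptVersion);
}

// Roll back by appending a copy of an earlier version as the newest one.
// The faulty version stays in the history, so the audit trail is preserved.
function rollbackTo(history: PromptVersion[], target: number): PromptVersion[] {
  const source = history.find((v) => v.version === target);
  if (!source) throw new Error(`version ${target} not found`);
  const next = Math.max(...history.map((v) => v.version)) + 1;
  return [...history, { version: next, template: source.template, createdAt: new Date() }];
}
```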

Runtime Assembly and the Template Variable System

At merchi.ai, a prompt is never a static string; it is a structured template that assembles at runtime. We use a double-curly-brace syntax to define template variables that are populated just-in-time by our Trigger.dev workers. We call this our “Merchi Scripting Language” (MSL). When a worker initiates a generation task, it fetches the appropriate template and injects dynamic context based on the specific product and tenant.

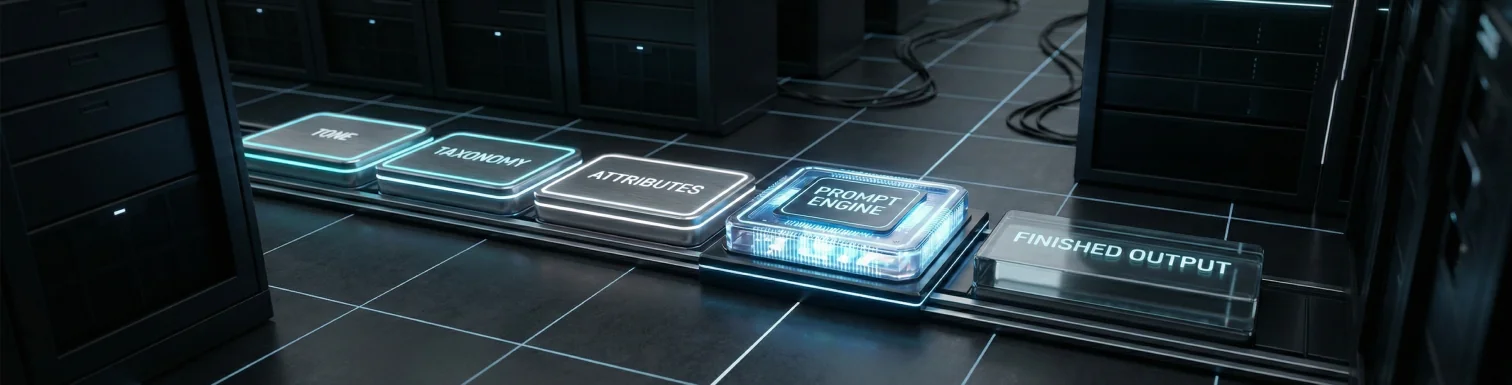
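The variable substitution itself can be small. The following is a minimal sketch of a double-curly-brace renderer, not the MSL parser itself. It assumes variables are dotted paths into a plain context object (e.g. `{{brandVoice}}` or `{{inputData.title}}`). The `render` and `lookup` names are hypothetical. So is the choice to leave unknown placeholders untouched rather than throwing.

```typescript
type Context = { [key: string]: unknown };

// Walk a dotted path like "inputData.title" through nested objects.
function lookup(ctx: Context, path: string): unknown {
  let current: unknown = ctx;
  for (const key of path.split(".")) {
    if (current === null || typeof current !== "object") return undefined;
    current = (current as Context)[key];
  }
  return current;
}

// Replace every {{path}} with its value from the context. Strings are
// inserted as-is and other values as JSON. Unknown paths are left untouched,
// so a missing variable stays visible in the assembled prompt.
function render(template: string, ctx: Context): string {
  return template.replace(/\{\{\s*([\w.]+)\s*\}\}/g, (match, path: string) => {
    const value = lookup(ctx, path);
    if (value === undefined) return match;
    return typeof value === "string" ? value : JSON.stringify(value);
  });
}
```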

A typical production prompt in merchi.ai assembles from several distinct modules:

- The Writing Knowledge: This is the most critical block. It contains the tenant’s brand tone of voice, their specific industry taxonomy, and their required attributes.

- The Schema Configuration: This tells the AI exactly what content blocks to generate: a 200-word description, a list of bullet points, or specific SEO tags.

- The Technical Constraints: This includes “Stop Words” (terms the AI is forbidden from using) and character limits for specific locales.

- The Input Data: The raw product data, which can include {{ASSETS}} (image URLs for vision tasks), existing metadata from a CSV, or raw HTML from a web scraping job.
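One way to picture the assembly is as an ordered list of labelled sections joined into a single prompt. The sketch below assumes each module has already been rendered to text. The `PromptModules` shape, the section labels and the ordering are illustrative only, not merchi.ai's actual layout.

```typescript
// Hypothetical container for the four modules described above.
interface PromptModules {
  writingKnowledge: string;
  schemaConfiguration: string;
  stopWords: string[];
  maxCharacters: number; // character limit for the target locale
  inputData: string;
}

// Concatenate the modules in a fixed order with clear section labels,
// so the model can tell brand guidance, output spec and raw data apart.
function assemblePrompt(m: PromptModules): string {
  const constraints: string[] = [];
  if (m.stopWords.length > 0) {
    constraints.push(`Never use these terms: ${m.stopWords.join(", ")}.`);
  }
  constraints.push(`Keep the output under ${m.maxCharacters} characters.`);
  return [
    `## Writing Knowledge\n${m.writingKnowledge}`,
    `## Output Schema\n${m.schemaConfiguration}`,
    `## Constraints\n${constraints.join("\n")}`,
    `## Input Data\n${m.inputData}`,
  ].join("\n\n");
}
```

Placing the raw input data last keeps the stable, tenant-level guidance together at the top. Only the final section changes from product to product.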

We also implemented a .rest suffix pattern for our variable system. Because LLMs have a finite context window, we want to avoid cluttering the prompt with irrelevant data. Our parser tracks which properties of the product object have been explicitly used in the prompt. Any remaining properties are grouped into {{inputData.rest}}, which we can choose to omit or include as supplementary context depending on the task’s requirements. This ensures the model’s “attention” is always focused on the most relevant information.
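The `.rest` behaviour can be sketched as a two-pass process. First, scan the template for explicit `{{inputData.<key>}}` references. Then collect every unreferenced top-level property into the rest object. This minimal illustration assumes flat product objects and JSON serialisation of the remainder. The `renderWithRest` function and its `includeRest` flag are hypothetical stand-ins for the per-task choice to omit or include the remainder.

```typescript
type Product = { [key: string]: unknown };

// Collect the top-level inputData keys the template references explicitly.
function usedKeys(template: string): Set<string> {
  const used = new Set<string>();
  const pattern = /\{\{\s*inputData\.(\w+)/g;
  let m: RegExpExecArray | null;
  while ((m = pattern.exec(template)) !== null) {
    if (m[1] !== "rest") used.add(m[1]);
  }
  return used;
}

// Render inputData placeholders. {{inputData.rest}} becomes a JSON object of
// every property not referenced elsewhere, or an empty string when omitted.
function renderWithRest(template: string, product: Product, includeRest: boolean): string {
  const used = usedKeys(template);
  const rest: Product = {};
  for (const key of Object.keys(product)) {
    if (!used.has(key)) rest[key] = product[key];
  }
  return template.replace(/\{\{\s*inputData\.(\w+)\s*\}\}/g, (match, key: string) => {
    if (key === "rest") return includeRest ? JSON.stringify(rest) : "";
    const value = product[key];
    if (value === undefined) return match;
    return typeof value === "string" ? value : JSON.stringify(value);
  });
}
```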

Per-Tenant Logic: Luxury Fashion vs. Industrial Hardware

The reason we prioritised per-tenant prompts is that the “correct” output for one merchant is a “bug” for another. Consider the difference between a luxury fashion brand and a hardware store. The fashion brand requires evocative, emotive language that sells a “lifestyle,” focusing on materials like “sustainably sourced silk” and styling advice for a “summer soirée.” In contrast, the hardware store needs clinical, technical accuracy, focusing on chuck sizes, torque ratings, and material durability.

Using our per-tenant configuration, both of these merchants use the same generate_copy task. However, the runtime assembly pulls radically different instructions. For the fashion brand, the {{brandVoice}} variable might inject instructions to “use a sophisticated, understated tone and avoid all technical jargon.” For the hardware store, it might inject instructions to “prioritise specifications and use a direct, authoritative tone.”
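In code, the difference between the two merchants reduces to data. The sketch below reuses a single `generate_copy` template with a hypothetical per-tenant configuration map. The tenant IDs, the `{{productName}}` variable and the `buildCopyPrompt` helper are illustrative assumptions. The voice strings are the two instructions quoted above.

```typescript
// Hypothetical per-tenant settings; only the data differs between tenants.
const tenantVoices: Record<string, string> = {
  "luxury-fashion": "Use a sophisticated, understated tone and avoid all technical jargon.",
  "hardware-wholesale": "Prioritise specifications and use a direct, authoritative tone.",
};

// One shared template for the generate_copy task.
const generateCopyTemplate =
  "Write a product description for {{productName}}.\nStyle: {{brandVoice}}";

// Fill the shared template with the tenant's voice and the product name.
// Function replacers avoid String.replace's special "$" patterns.
function buildCopyPrompt(tenantId: string, productName: string): string {
  const voice = tenantVoices[tenantId];
  if (voice === undefined) throw new Error(`no brand voice for tenant ${tenantId}`);
  return generateCopyTemplate
    .replace("{{productName}}", () => productName)
    .replace("{{brandVoice}}", () => voice);
}
```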

This flexibility extends to the Taxonomy and Attributes system. If a merchant specialises in technical hardware, their prompt will include instructions to look for specific attributes like “Input Voltage” or “Max Pressure.” If they sell clothing, those attributes are discarded in favour of “Fit,” “Neckline,” and “Care Instructions.” This per-tenant customisation happens at the database level, meaning the merchi.ai core engine remains lean and scalable while providing a bespoke experience for every user.
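The attribute side follows the same pattern: the product's category decides which attributes the prompt asks for. The sketch below assumes a tenant's attribute configuration is a map from category to attribute names. That shape, the example category names and the `attributeInstruction` helper are hypothetical, not the actual merchi.ai schema.

```typescript
// Hypothetical attribute configuration: category -> attributes to extract.
type AttributeConfig = Record<string, string[]>;

const hardwareAttributes: AttributeConfig = {
  "Power Tools": ["Input Voltage", "Chuck Size", "Max Torque"],
  "Compressors": ["Max Pressure", "Tank Capacity"],
};

const fashionAttributes: AttributeConfig = {
  "Dresses": ["Fit", "Neckline", "Care Instructions"],
};

// Build the extraction instruction for one product's category. Categories
// with no configured attributes produce no instruction at all.
function attributeInstruction(config: AttributeConfig, category: string): string {
  const attributes = config[category] ?? [];
  if (attributes.length === 0) return "";
  return `Extract these attributes: ${attributes.join(", ")}.`;
}
```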

Writing Knowledge: The Structured Soul of a Brand

The most innovative part of the merchi.ai engine is what we call “Writing Knowledge.” This is a structured object that feeds into every generation as a massive context block. It is the “source of truth” for how a brand should be represented. It isn’t just a list of instructions; it is a comprehensive guide to the brand’s unique identity.

Writing Knowledge consists of four key pillars and is completely extensible:

- Brand Tone of Voice: A detailed description of the brand’s personality, vocabulary preferences, and target audience.

- Taxonomy: The hierarchical category tree that dictates how products should be classified.

- Attributes Configuration: The specific data points that must be extracted or generated for every category.

- Stop Words: A list of forbidden terms, essential for brands that want to avoid industry clichés or specific competitor-associated language.
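A structured object like this maps naturally onto a typed interface. The sketch below models the four pillars plus an open-ended `extensions` map for the extensibility mentioned above. The field names, the `toContextBlock` serialiser and the plain-text layout of the context block are assumptions made for illustration.

```typescript
interface TaxonomyNode {
  name: string;
  children: TaxonomyNode[];
}

interface WritingKnowledge {
  toneOfVoice: string;
  taxonomy: TaxonomyNode[];
  attributes: Record<string, string[]>; // category -> required attributes
  stopWords: string[];
  extensions: Record<string, string>; // hypothetical slot for extra pillars
}

// Flatten the category tree into indented lines, e.g. "Tools" then "  Drills".
function taxonomyLines(nodes: TaxonomyNode[], depth = 0): string[] {
  const lines: string[] = [];
  for (const n of nodes) {
    lines.push("  ".repeat(depth) + n.name);
    lines.push(...taxonomyLines(n.children, depth + 1));
  }
  return lines;
}

// Serialise every pillar into one labelled context block for the prompt.
function toContextBlock(wk: WritingKnowledge): string {
  const attributeLines = Object.keys(wk.attributes)
    .map((category) => `${category}: ${wk.attributes[category].join(", ")}`)
    .join("\n");
  const sections: string[] = [
    `Tone of voice:\n${wk.toneOfVoice}`,
    `Taxonomy:\n${taxonomyLines(wk.taxonomy).join("\n")}`,
    `Attributes:\n${attributeLines}`,
    `Stop words: ${wk.stopWords.join(", ")}`,
  ];
  for (const key of Object.keys(wk.extensions)) {
    sections.push(`${key}:\n${wk.extensions[key]}`);
  }
  return sections.join("\n\n");
}
```

Because the pillars are typed fields rather than free text, each one can be edited, validated and versioned on its own before it is serialised into the prompt.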

By treating Writing Knowledge as a structured context block, we ensure that the AI isn’t just “guessing” how to write. We are giving it a map and a compass. This significantly reduces the variability that stems from the non-deterministic nature of LLMs. When the model knows exactly what attributes it is looking for and what tone it must adopt, the consistency of the output improves by orders of magnitude. This is how we achieve the level of accuracy required to replace 125 years of human work in a single day.

The Challenge of Prompt Regression

One of the hardest parts of treating prompts as code is regression testing. In traditional software engineering, a change to a function either works or it doesn’t. In prompt engineering, a change to an English sentence can have subtle, non-deterministic effects. Improving the Japanese translation for technical specs might accidentally degrade the brand tone for French fashion descriptions.

To mitigate this, we are building out an automated “Prompt Sandbox” in our admin UI. This allows us to run a candidate prompt version against a “Gold Standard” dataset: a collection of 100 products where we have already manually verified the perfect output. Before a new prompt version is deployed to a production tenant, we can compare the new AI output against the gold standard using another LLM as an evaluator. This isn’t a perfect system, but it provides a “sanity check” that catches major regressions before they reach the customer.
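The comparison step can be sketched as a small harness. It scores the baseline and candidate outputs against each gold-standard record and flags any product whose score drops by more than a tolerance. The `Evaluator` type stands in for the LLM-as-judge call and is hypothetical. It is injected as a plain function so the harness logic can be shown without a model. The 0 to 1 score scale and the default tolerance of 0.05 are also assumptions.

```typescript
interface GoldRecord {
  productId: string;
  expected: string; // manually verified "perfect" output
}

// Stand-in for an LLM-as-judge call: returns a score from 0 to 1 for how
// closely `actual` matches `expected`. Hypothetical; supplied by the caller.
type Evaluator = (expected: string, actual: string) => number;

interface Regression {
  productId: string;
  baselineScore: number;
  candidateScore: number;
}

// Score both prompt versions' outputs on every gold record and report the
// products where the candidate scores worse than the baseline by > tolerance.
function findRegressions(
  gold: GoldRecord[],
  baselineOutputs: Map<string, string>,
  candidateOutputs: Map<string, string>,
  evaluate: Evaluator,
  tolerance = 0.05,
): Regression[] {
  const regressions: Regression[] = [];
  for (const record of gold) {
    const baselineScore = evaluate(record.expected, baselineOutputs.get(record.productId) ?? "");
    const candidateScore = evaluate(record.expected, candidateOutputs.get(record.productId) ?? "");
    if (baselineScore - candidateScore > tolerance) {
      regressions.push({ productId: record.productId, baselineScore, candidateScore });
    }
  }
  return regressions;
}
```

Comparing per product, rather than only the average score, helps catch the failure mode described above: a change that improves Japanese technical specs while quietly degrading French fashion copy.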

This is the “Black Box” problem of 2026. Because we cannot see the weights of the model, we must treat it as a probabilistic engine. By wrapping it in the deterministic structures of software engineering (versioning, a registry, and testing), we can harness the power of AI without the chaos that usually accompanies it. We are moving toward a future where “prompt bugs” are handled with the same discipline as “code bugs.”

Conclusion: Engineering the Future of Content

Treating prompt engineering as software engineering is what allows merchi.ai to operate at enterprise scale. We have moved beyond “one-liner” prompts to a sophisticated system of runtime assembly and database-driven configuration. This architecture ensures that every merchant on our platform, from the smallest boutique to the largest wholesaler, receives high-quality, brand-aligned content that actually converts.

In the next chapter, we will shift from the “what” to the “how.” We will dive into our Automation Logic and the technical details of our Trigger.dev worker architecture. We’ll show you how we orchestrate the massive ingestion of API payloads, ZIP files, CSVs, and web-scraped data to feed this prompt engine at a scale that can truly transform retail operations.

Ready to move from manual prompting to engineered automation? Book a Demo or Start Automating with merchi.ai.