Why We Never Call LLMs Directly: The Anti-Fragility of the merchi.ai Gateway Pattern

The Anti-Fragility Mandate in 2026

In the fast-moving landscape of 2026, the digital commerce sector has moved beyond simple storefronts and entered the era of “Agentic E-commerce.” In this new reality, high-quality product data is no longer just a requirement for SEO; it is the fundamental fuel for autonomous shopping agents that make purchasing decisions on behalf of consumers. To thrive in this environment, a platform like merchi.ai must be capable of processing over a million products per day with surgical precision. However, there is a massive technical hurdle that most builders ignore: the extreme volatility and rapid deprecation cycles of the AI model market.

As a small team building merchi.ai, we realised very early on that relying on a single AI provider, whether OpenAI, Google, or Anthropic, would be a significant strategic error. In 2026, the technological half-life of a “State-of-the-Art” (SOTA) model is roughly four months. A model that dominates the benchmarks for image-to-attribute extraction in January is often deprecated or outclassed by a more efficient, cheaper alternative by April. If your core business logic is hardcoded to a specific provider’s SDK, you aren’t building a sustainable platform; you’re building a ticking time bomb that will eventually explode in a cloud of “404 Not Found” or “Model Deprecated” errors.

Anti-fragility is the core philosophy behind merchi.ai. We don’t just want our system to survive model changes; we want it to get better because of them. This required us to rethink the very way our application “talks” to AI. We needed a system where specific models are treated as hot-swappable commodities rather than permanent infrastructure. This realisation led to our most important architectural rule: we never call an AI provider directly. By decoupling our merchandising logic from the underlying model providers, we have ensured that merchi.ai remains agile and resilient in a world where today’s leader is tomorrow’s legacy.

Whether we are dealing with multi-language generation for a global fashion brand or technical attribute extraction for an industrial hardware supplier, our engine remains consistent. We have turned model volatility from a threat into our greatest competitive advantage. This approach allows us to adopt the latest breakthroughs the hour they are released, ensuring our customers always benefit from the highest quality output at the lowest possible cost. In the context of 2026’s “Zero-Click Commerce,” where data accuracy is the only thing that matters to a shopping bot, this anti-fragile stance is what separates professional infrastructure from temporary wrappers.

The OpenRouter Gateway: One API to Rule Them All

To manage the complexity of the 2026 model landscape, we adopted the OpenRouter gateway pattern. OpenRouter acts as a unified abstraction layer, providing us with access to virtually every major model, including GPT-5, Claude 4, and Gemini 3, through a single, OpenAI-compatible API. For a small team, this reduction in complexity is a massive force multiplier. Instead of managing a dozen different SDKs, rate limits, and billing accounts, we manage exactly one key and one consistent interface across our entire platform.

The technical implementation of this gateway is the heartbeat of our automation engine. By pointing our OpenAI client to a custom baseURL, we gain the ability to route traffic to any model in existence without changing a single line of business logic. This is particularly vital when our system is handling thousands of concurrent requests from bulk ZIP or CSV uploads. We need a consistent interface that doesn’t break when a provider decides to update their API version or change their response headers. Our code remains clean, focused, and entirely dedicated to the logic of merchandising rather than the plumbing of API integrations.

```typescript
import OpenAI from 'openai'

/**
 * The merchi.ai unified AI client.
 * We route everything through OpenRouter to ensure we can swap
 * providers without a code deploy.
 */
const openai = new OpenAI({
  baseURL: 'https://openrouter.ai/api/v1',
  apiKey: process.env.OPENROUTER_API_KEY,
  defaultHeaders: {
    'HTTP-Referer': 'https://merchi.ai',
    'X-Title': 'merchi.ai Production Engine',
  },
})

/**
 * Core execution logic.
 * Notice how the model ID is dynamic, fetched from our registry.
 */
async function processMerchandisingTask(tenantId: string, taskType: 'vision' | 'copy') {
  // getModelConfig is the registry lookup (defined elsewhere in the codebase).
  const config = await getModelConfig(tenantId, taskType)

  const response = await openai.chat.completions.create({
    model: config.modelId, // e.g., 'google/gemini-pro-1.5'
    temperature: config.temperature,
    messages: [
      { role: 'system', content: config.systemPrompt },
      { role: 'user', content: 'Extract attributes for this product...' },
    ],
  })

  return response.choices[0].message.content
}
```

Using this pattern, we can treat “intelligence” as a utility, much like electricity or water. If one provider experiences a regional outage (a common occurrence even in 2026 as demand for compute surges), our system doesn’t crash. We simply re-route the traffic. This level of provider redundancy is essential for enterprise-grade merchandising. When a retailer is preparing for a major seasonal launch, they cannot afford for their product data pipeline to be offline because a single AI lab in San Francisco is having a bad day. The gateway acts as our high-availability load balancer for intelligence, ensuring that the work of our customers is never held hostage by the technical difficulties of a third party.

Furthermore, the gateway allows us to experiment with niche, task-specific models that wouldn’t justify a full integration on their own. For instance, we might use a highly specialised, small-parameter model for simple alt-tag generation while reserving the “heavy hitters” like Claude for culturally nuanced styling advice. This granular control over our AI resources is what allows merchi.ai to maintain a superior price-to-performance ratio compared to “model-locked” competitors. It ensures that our multi-tenant data handling remains efficient and cost-effective as we scale toward our goal of processing a million products daily. We are not just using the most popular models; we are using the correct models for every micro-step of the merchandising process.

This abstraction also simplifies our security posture. By centralising our AI access through a single gateway, we can implement more rigorous monitoring and auditing of our token usage and data flow. In a multi-tenant SaaS environment, knowing exactly where data is going and which models are processing it is a core requirement for compliance and trust. Our gateway pattern provides a transparent audit trail, allowing us to assure our users that their proprietary Writing Knowledge and brand assets are being handled with the highest level of technical integrity. It turns a chaotic web of API integrations into a single, observable stream.

The Model Registry: Decoupling Intelligence from Code

The true “brain” of our architecture isn’t the AI models themselves; it is our proprietary Model Registry, stored within our Supabase PostgreSQL database. This registry allows us to manage our entire AI infrastructure as data, not code. In most AI applications, the model choice is hardcoded as a constant. At merchi.ai, the model choice is a dynamic query resolved at runtime based on the specific merchandising task. This means we can change the intelligence profile of any feature in real-time without a single code deployment or pull request. This level of decoupling is what allows a small team to maintain a platform with the complexity of a much larger engineering organisation.

This database-driven approach is a game-changer for a small team. If we discover that a new model from Google performs better at extracting attributes from technical drawings than our current default, we don’t need to trigger a Vercel build. We simply update a row in our model_registry table. This “hot-swap” capability allows us to be incredibly responsive to the rapid shifts in model quality that define 2026. It eliminates the friction of traditional deployment cycles and puts the power of model selection in the hands of the strategy, not just the code. We can benchmark, test, and deploy a new model for a specific task in minutes rather than hours or days.
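As a rough illustration of what managing models "as data" can look like, the sketch below models a registry row and a lookup by task type. The column names (`task_type`, `model_id`, `is_active`, `updated_at`), the `resolveModel` helper, and the vendor IDs are assumptions made for this example, not the actual merchi.ai schema; in production the rows would come from a database query rather than an in-memory array.

```typescript
// Hypothetical shape of a row in the model_registry table (column names are assumed).
type TaskType = 'vision' | 'copy' | 'taxonomy' | 'scraping' | 'onboarding'

interface RegistryRow {
  task_type: TaskType
  model_id: string    // gateway model identifier
  is_active: boolean  // flipping this flag is the "hot swap"
  updated_at: string  // ISO-8601 UTC timestamp, so string order equals time order
}

// Pick the most recently updated active row for a task, or undefined if none exists.
function resolveModel(rows: RegistryRow[], task: TaskType): RegistryRow | undefined {
  return rows
    .filter((r) => r.task_type === task && r.is_active)
    .sort((a, b) => (a.updated_at < b.updated_at ? 1 : a.updated_at > b.updated_at ? -1 : 0))[0]
}

// Placeholder rows; the model IDs are invented for the example.
const exampleRows: RegistryRow[] = [
  { task_type: 'vision', model_id: 'vendor-a/vision-old', is_active: false, updated_at: '2026-01-10T00:00:00Z' },
  { task_type: 'vision', model_id: 'vendor-c/vision-mid', is_active: true, updated_at: '2026-02-01T00:00:00Z' },
  { task_type: 'vision', model_id: 'vendor-b/vision-new', is_active: true, updated_at: '2026-04-02T00:00:00Z' },
]

const activeVision = resolveModel(exampleRows, 'vision') // the 'vendor-b/vision-new' row
```

Under these assumptions, swapping a model is a single row update: the next call to `resolveModel` returns the new row without any change to the calling code.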

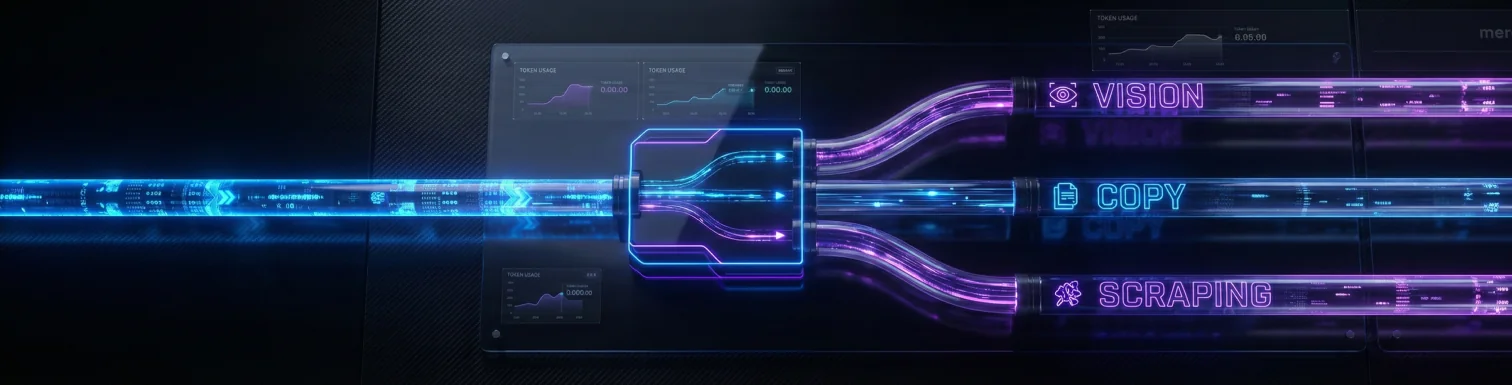

Our registry is organised around specific “Task Types,” ensuring that the right tool is always used for the job. We have identified several core intelligence needs that power merchi.ai:

- Vision Tasks: Optimised for the heavy lifting of extracting detailed data from high-resolution product images.

- Copywriting Tasks: Prioritised for brand-voice alignment and adherence to our Writing Knowledge configuration.

- Taxonomy Tasks: High-logic models that map messy raw data to clean, structured category trees.

- Scraping Tasks: Models specifically tuned to navigate and extract structured data from manufacturer websites.

- Onboarding Tasks: Low-latency models designed to guide users through the initial merchi.ai setup and configuration.

By categorising our AI needs this way, we can fine-tune parameters like temperature and top-p on a per-task basis within the database. A taxonomy task needs zero “creativity”: it must be rigid, accurate, and follow the strict rules of the brand’s data structure. A styling advice task needs flair, creative associations, and brand-aligned personality. By managing these settings in the database, we ensure that every product processed by merchi.ai meets the specific needs of the merchant, whether they are selling high-fashion silk scarves or industrial drill bits. It allows us to maintain a consistent brand voice while ensuring technical attributes are never hallucinated.
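The per-task tuning described above might be represented as follows. This is a minimal sketch: the numeric values are illustrative rather than our production settings, and `samplingByTask` and `sanitiseSampling` are hypothetical names. The clamping ranges follow the OpenAI-compatible convention of temperature in [0, 2] and top-p in [0, 1].

```typescript
interface SamplingParams {
  temperature: number // 0 is as deterministic as the model allows
  topP: number        // nucleus sampling cutoff
}

type TunedTask = 'taxonomy' | 'vision' | 'copy' | 'styling'

// Illustrative values only; the real settings would live in the registry table.
const samplingByTask: Record<TunedTask, SamplingParams> = {
  taxonomy: { temperature: 0, topP: 1 },     // rigid, rule-following output
  vision: { temperature: 0.1, topP: 1 },     // factual attribute extraction
  copy: { temperature: 0.7, topP: 0.9 },     // brand voice with some variation
  styling: { temperature: 0.9, topP: 0.95 }, // the most creative task
}

// Clamp a value into [lo, hi]; non-finite input falls back to a safe default.
function clamp(x: number, lo: number, hi: number, fallback: number): number {
  return isFinite(x) ? Math.min(hi, Math.max(lo, x)) : fallback
}

// Validate values loaded from the database before sending them to the gateway.
function sanitiseSampling(p: SamplingParams): SamplingParams {
  return {
    temperature: clamp(p.temperature, 0, 2, 0),
    topP: clamp(p.topP, 0, 1, 1),
  }
}
```

Validating at read time means a mistyped value in a database row degrades to a conservative setting instead of producing a rejected request.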

Moreover, this registry enables per-tenant model overrides using Supabase Row Level Security (RLS). If a specific enterprise client has a legal mandate to only use models with high data-residency guarantees in the UK or EU, we can accommodate them instantly. We simply assign their tenant_id a specific set of models in the registry. This level of flexibility is one of the many reasons merchi.ai is becoming the preferred choice for corporate legal teams who require both innovation and strict compliance. It turns the “black box” of AI into a transparent, configurable tool that respects corporate governance and international data laws. We aren’t just giving them AI; we’re giving them a controlled, auditable AI environment.
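The precedence rule behind per-tenant overrides can be sketched in a few lines. Everything here is an assumption for illustration: the `tenant_id` column with `null` marking a global default, the `modelsForTenant` helper, and the placeholder model IDs. Row visibility itself would be enforced by RLS policies in the database; this sketch only shows how tenant-specific rows could take priority over global ones once the rows are loaded.

```typescript
interface TenantModelRow {
  tenant_id: string | null // null marks a global default (assumed convention)
  task_type: string
  model_id: string
}

// Return the models a tenant may use for a task: tenant-specific rows win,
// and global rows are used only when the tenant has no rows of its own.
function modelsForTenant(rows: TenantModelRow[], tenantId: string, task: string): string[] {
  const forTask = rows.filter((r) => r.task_type === task)
  const own = forTask.filter((r) => r.tenant_id === tenantId)
  const chosen = own.length > 0 ? own : forTask.filter((r) => r.tenant_id === null)
  return chosen.map((r) => r.model_id)
}

// Placeholder data: one global default and one tenant restricted to an EU-hosted model.
const exampleTenantRows: TenantModelRow[] = [
  { tenant_id: null, task_type: 'copy', model_id: 'vendor-a/general' },
  { tenant_id: 'tenant-eu', task_type: 'copy', model_id: 'vendor-b/eu-hosted' },
]

const euModels = modelsForTenant(exampleTenantRows, 'tenant-eu', 'copy')       // ['vendor-b/eu-hosted']
const defaultModels = modelsForTenant(exampleTenantRows, 'tenant-x', 'copy')   // ['vendor-a/general']
```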

The Fallback Chain: Engineering 99.9% Reliable AI Workflows

In a production environment processing a million products per day, failure is not a possibility; it is a certainty. API rate limits, transient network errors, and model hallucinations are facts of life in 2026. To handle this, merchi.ai employs a sophisticated Fallback Chain. We never rely on a single request succeeding on the first try. Instead, we have architected a tiered system of resilience that ensures the data pipeline never stops moving, no matter how volatile the external providers become. This is the difference between a prototype and an enterprise-grade merchandising engine.

When the merchi.ai engine initiates a task, such as generating multi-language descriptions or extracting SEO metadata, it follows a strictly defined hierarchy of model resolution. This logic is orchestrated by Trigger.dev, which manages the asynchronous retries and job states. If the primary model for a task fails, the system doesn’t return an error to the user; it silently moves down the chain to the next available intelligence tier. This ensures that the merchandising workflow remains uninterrupted even during provider downtime or unexpected model deprecations. Our users see “Completed,” while the system might have fought through three different providers behind the scenes to get there.

Our fallback logic follows a robust five-tier path to success:

- Tier 1 (Task-Specific Override): The “SOTA” model currently assigned to that micro-task (e.g., Gemini Flash for high-res vision).

- Tier 2 (Tenant Preference): A model explicitly requested or vetted by the customer for their brand’s specific needs.

- Tier 3 (Global Default): Our thoroughly tested, stable workhorse model for that category (e.g., Claude 3.7).

- Tier 4 (Environment Variable): A “fail-safe” model defined in our Vercel environment for emergency manual re-routing.

- Tier 5 (Hardcoded Fallback): An older, highly reliable model used as a last resort to prevent total system failure.
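The five tiers above can be flattened into an ordered list of candidate models. The following is a sketch under stated assumptions: `FallbackSources`, `buildFallbackChain`, and the placeholder model IDs are hypothetical, and the Tier 4 value is passed in as a plain argument rather than read from the environment so the function stays easy to test.

```typescript
interface FallbackSources {
  taskOverride?: string     // Tier 1: task-specific override
  tenantPreference?: string // Tier 2: tenant preference
  globalDefault?: string    // Tier 3: global default for the task category
  envFailSafe?: string      // Tier 4: value of an environment variable, read by the caller
}

// Tier 5: placeholder ID standing in for the hardcoded last-resort model.
const HARDCODED_FALLBACK = 'example/legacy-stable'

// Build the ordered list of models to try, skipping unset tiers and duplicates.
function buildFallbackChain(s: FallbackSources): string[] {
  const ordered = [s.taskOverride, s.tenantPreference, s.globalDefault, s.envFailSafe, HARDCODED_FALLBACK]
  const chain: string[] = []
  for (const id of ordered) {
    if (id && chain.indexOf(id) === -1) chain.push(id)
  }
  return chain
}

const exampleChain = buildFallbackChain({
  taskOverride: 'vendor-a/vision',
  globalDefault: 'vendor-a/vision', // duplicate of Tier 1, so it is dropped
  envFailSafe: 'vendor-b/general',
})
// exampleChain is ['vendor-a/vision', 'vendor-b/general', 'example/legacy-stable']
```

Removing duplicates matters in practice: if two tiers point at the same model, retrying it immediately after a failure only wastes time and tokens.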

This chain ensures that merchi.ai provides near-perfect uptime. If our vision-specialised model is under heavy load or hit by a rate limit, the system might automatically fall back to a slightly more expensive but available generalist model to finish the job. This ensures that when a merchant uploads a CSV with 50,000 rows on a Monday morning, they aren’t met with a “Failed” status on Tuesday. The system works through the chaos, prioritising successful output over all else. It is the architectural equivalent of a self-healing network, designed to mitigate the inherent unreliability of external AI APIs.
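Walking an ordered chain of models could look like the sketch below. It is deliberately generic: `runWithFallback` and its types are hypothetical names, and the `attempt` callback stands in for whatever actually calls the gateway, so nothing here depends on a particular SDK or job runner. Each failure is recorded so that a later analysis can see which models triggered fallbacks.

```typescript
interface AttemptLog {
  modelId: string
  ok: boolean
  error?: string
}

interface FallbackResult<T> {
  value: T
  modelId: string        // the model that finally succeeded
  attempts: AttemptLog[] // every attempt, in order, including failures
}

// Try each model in order and return the first success.
// Throws only when every model in the chain has failed.
async function runWithFallback<T>(
  chain: string[],
  attempt: (modelId: string) => Promise<T>,
): Promise<FallbackResult<T>> {
  const attempts: AttemptLog[] = []
  for (const modelId of chain) {
    try {
      const value = await attempt(modelId)
      attempts.push({ modelId, ok: true })
      return { value, modelId, attempts }
    } catch (err) {
      const message = err instanceof Error ? err.message : String(err)
      attempts.push({ modelId, ok: false, error: message })
    }
  }
  throw new Error(`All ${chain.length} models failed: ${attempts.map((a) => a.modelId).join(', ')}`)
}
```

A caller would pass a chain such as `['primary/model', 'backup/model']` together with a function that sends the request for a given model ID. A production version would likely add per-model retries with backoff before moving to the next tier; that is omitted here to keep the control flow visible.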

This resilience is particularly important for our Multi-language generation feature. Different models have varying strengths across the eight languages we support. Our fallback chain allows us to route Japanese translation tasks to models with the highest cultural nuance, while falling back to more robust generalists if the primary choice is unavailable. This ensures that the final output, whether in English, French, or Japanese, is always professional, brand-aligned, and accurate to the original product attributes. We are not just generating text; we are generating trust across borders, ensuring that a brand’s voice remains coherent in every market they serve.

The Economics of Intelligence: Tracking Every Token

One of the hidden dangers of the “AI boom” for retailers is the unpredictable nature of token costs. When you are automating merchandising at scale, small differences in input and output token pricing can lead to massive variances in monthly overhead. Because every request in merchi.ai flows through our unified gateway, we have achieved total Economic Observability. We track every single token, identifying exactly which model was used, which task it performed, and which tenant it belongs to. This level of detail is necessary when your mission is to process 1,000,000 products per day without bankrupting the user or the platform.

We log this data into a custom usage-tracking table in Supabase, giving us a real-time view of our unit economics. As a small team, this data is vital for our sustainability. It allows us to perform rigorous “Cost vs. Quality” audits. If we notice that a new, cheaper model provides 99% of the accuracy of a more expensive one for alt-tag generation, we can switch the registry and instantly improve our margins without sacrificing the customer experience. It is about being a smart consumer of intelligence, treating tokens as a raw material that must be managed efficiently.

Our tracking system provides several key metrics that we use to optimise the engine:

- Cost per SKU: The average cost to fully enrich a product across all languages, SEO metadata, and attributes.

- Model Success Rate: Which models are triggering the most fallbacks or retries, helping us identify instability early.

- Token Efficiency: How well our prompts are performing in terms of output quality vs. token length.

- Tenant Usage: Granular billing data for our enterprise clients, ensuring transparent and fair pricing based on actual compute.
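Several of these metrics reduce to simple aggregations over the usage log. The sketch below assumes one row per model attempt with the fields shown; the `UsageRow` shape, the function names, and the sample figures are hypothetical and chosen only to make the arithmetic easy to follow.

```typescript
// Assumed shape of one usage-log row: one row per model attempt.
interface UsageRow {
  tenantId: string
  sku: string
  modelId: string
  costUsd: number
  succeeded: boolean // false if this attempt failed and triggered a fallback
}

// Cost per SKU: total spend divided by the number of distinct products enriched.
function costPerSku(rows: UsageRow[]): number {
  const skus: string[] = []
  let total = 0
  for (const r of rows) {
    total += r.costUsd
    if (skus.indexOf(r.sku) === -1) skus.push(r.sku)
  }
  return skus.length === 0 ? 0 : total / skus.length
}

// Model success rate: share of attempts per model that succeeded.
function successRateByModel(rows: UsageRow[]): Record<string, number> {
  const tally: Record<string, { ok: number; total: number }> = {}
  for (const r of rows) {
    const t = tally[r.modelId] ?? { ok: 0, total: 0 }
    t.total += 1
    if (r.succeeded) t.ok += 1
    tally[r.modelId] = t
  }
  const rates: Record<string, number> = {}
  for (const id of Object.keys(tally)) {
    const t = tally[id]
    if (t) rates[id] = t.ok / t.total
  }
  return rates
}

// Invented sample data: model-a fails once on sku-1, then model-b finishes the job.
const sampleUsage: UsageRow[] = [
  { tenantId: 't1', sku: 'sku-1', modelId: 'model-a', costUsd: 0.25, succeeded: false },
  { tenantId: 't1', sku: 'sku-1', modelId: 'model-b', costUsd: 0.5, succeeded: true },
  { tenantId: 't1', sku: 'sku-2', modelId: 'model-a', costUsd: 0.25, succeeded: true },
]

const avgCost = costPerSku(sampleUsage)       // 0.5 (1.00 USD across 2 SKUs)
const rates = successRateByModel(sampleUsage) // { 'model-a': 0.5, 'model-b': 1 }
```

Counting failed attempts in the cost total is a design choice: it keeps the cost-per-SKU figure honest about what unreliable models actually cost.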

This data-driven approach allows us to participate in the AI market as “liquid buyers.” We aren’t locked into high-cost legacy contracts; we move our volume to where the value is highest. This allows merchi.ai to offer a million-product-per-day capacity at a fraction of the cost of manual merchandising teams or less-optimised AI wrappers. We are passing those savings directly to our customers, making automated merchandising accessible to retailers of all sizes. It ensures that the “unsexy” work of data entry doesn’t just get faster, it gets cheaper and more accurate every single day, creating a sustainable path for retailers to compete in the agentic era.

By analysing this data over time, we can also predict future costs and advise our customers on how to best structure their Writing Knowledge configurations for maximum efficiency. If a brand uses overly verbose brand voice instructions, we can show them the exact token cost of that verbosity. This transparency builds a different kind of relationship with our clients: one based on shared efficiency and technical clarity. We aren’t just a service provider; we are a partner in their digital transformation, helping them navigate the complex economics of 2026 e-commerce.
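The cost of verbosity is straightforward arithmetic once token counts are logged. A minimal sketch, with a hypothetical helper name and purely illustrative numbers (the price below is not a quote for any real model):

```typescript
// Extra spend caused by sending `extraPromptTokens` additional input tokens on every request.
function verbosityCostUsd(
  extraPromptTokens: number,
  requests: number,
  usdPerMillionInputTokens: number,
): number {
  return (extraPromptTokens * requests * usdPerMillionInputTokens) / 1e6
}

// Illustrative: 400 surplus instruction tokens, 50,000 products, 2 USD per million input tokens.
const surplusCost = verbosityCostUsd(400, 50000, 2) // 40 (USD)
```

In this example, trimming 400 tokens of brand-voice instructions saves 20 million input tokens over a 50,000-product run, which is the kind of concrete figure that makes the conversation with a client easier.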

Conclusion: Building for the Future of Retail

Mastering the model landscape was just the beginning. The real magic of merchi.ai happens when we take that raw AI intelligence and apply our proprietary Writing Knowledge system. By architecting our “Brain” as an anti-fragile, gateway-driven engine, we have created a foundation that is as resilient as it is intelligent. We have removed the risk of model lock-in, solved the problem of provider downtime, and mastered the economics of high-scale AI. This foundation is what allows us to process 125 years of human labour in a single day, ensuring that retailers can keep up with the demands of the 2026 marketplace.

We are building merchi.ai to be the backbone of the agentic e-commerce era. Our commitment to these “under the hood” technical deep-dives is what allows us to deliver on the promise of automated merchandising that actually works at scale. We don’t just use AI; we manage it as a high-leverage commodity, ensuring that our customers always have access to the absolute cutting edge of what is possible in 2026. This technical discipline is what allows a small team to out-innovate larger, slower competitors who are still struggling with legacy integrations.

In the next chapter, we will go deep into the Writing Knowledge system itself, the logic that takes raw AI output and polishes it into professional, brand-aligned merchandising gold. We will show you how we move from “generic AI text” to high-converting masterpieces that sound exactly like your best senior copywriter. We’ll explore how we teach an AI to understand the soul of a brand, ensuring that every description, attribute, and styling tip is culturally nuanced and perfectly aligned with your business goals.

Ready to see how our anti-fragile AI architecture can scale your retail operations? Book a Demo or Start Automating with merchi.ai.